Instagram policy change means it can delete rule-breaking accounts faster

Instagram announced a policy change that allows the service to delete accounts that break too many of its rules during a set period of time. Until now, the company removed only accounts that had "a certain percentage of violating content."

That percentage rule is still in effect, though the company doesn't disclose the exact percentage. The new rule additionally allows Instagram to take down accounts that rack up too many violations within a set window of time.

The change comes as the company faces criticism for its inability to block graphic images of a teen's dead body on its platform. Though Instagram says it has used image-blocking tech to prevent the photos from spreading, the platform hasn't been able to catch everything, as my Mashable colleague Morgan Sung reported earlier. Instagram said it disabled many anonymous accounts responsible for the continued sharing.

Additionally, Instagram now says it will send warnings to people whose accounts are in danger of being deleted for breaking too many of its rules. The app will alert users who have had posts removed for rule-breaking, and will let them know if an account deletion is imminent.

Previously, users could have their accounts deleted with no warning, and without necessarily understanding what they had done wrong. Some people whose accounts were deleted assumed they had been hacked, since their accounts simply disappeared.

However, there's one common scenario where these warnings will not apply: accounts that are disabled for trademark or copyright violations. That process, which is a sore spot for many accounts that post viral videos, will remain separate, according to an Instagram spokesperson.

Source: mashable.com

Source: David Apinga

Trending News

Housing Project: President Mahama launches Oxygen City in Volta, reaffirms commitment to equitable regional development

23:20

Citizen Ato Dadzie praises Lordina Mahama's humility

16:05

Yoruba Kingdom honours Prez. Mahama for global leadership

15:28

Midwifery hackathon aims to reduce maternal mortality in Ghana

15:32

KAA 2028 campaign denounces false claims of endorsing President Mahama

15:15

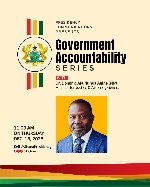

'Government Accountability Series': Attorney General, Justice Minister speaks tomorrow Dec 18

20:02

Asantehene arrives in Accra to present Bawku conflict mediation report to President Mahama

15:52

Coalition of Unpaid Nurses demands immediate payment of arrears, threaten strike

15:11

Namibian Parliamentary delegation visits Ghana’s National Petroleum Authority for study tour

15:11

Ablekuma West MP begins local production of pavement blocks ahead of community road paving project

15:02